If you only follow AI through model names, the whole field starts to look noisier than it really is.

One model ships. Then another one ships. Then a benchmark goes up. Then a new naming scheme appears. Then everyone argues about whether the real story is reasoning, multimodality, memory, or agents.

I think the cleaner way to understand the last sixty years is to ask a simpler question:

- What is the system actually allowed to do?

That question makes the progression much easier to see.

- ELIZA could mirror language.

- Voice assistants could map recognised requests to fixed actions.

- Large language models could generate language across many tasks.

- Agents could start taking actions, checking results, and continuing.

- Multi-agent systems could split work up and coordinate it.

- The newest systems are starting to sit across software rather than inside a single app.

That's why I think the move from chatbots to operating systems is the right frame.

If you want the more visual version of this argument, The Intelligence Stack maps the same progression as an interactive guide.

The first era was imitation

The original chatbot era was mostly about imitation.

ELIZA wasn't intelligent in any serious sense. It matched patterns in a sentence and returned a scripted response. If you said you felt sad, it could notice the word and throw a question back at you. That was enough to create the illusion of conversation, and the illusion itself turned out to be powerful.

People projected understanding onto a system that had none.

That part still holds.

The ELIZA effect didn't disappear just because the models got better. We still over-attribute depth to systems that speak fluently.

Later systems such as A.L.I.C.E. became much larger and more elaborate, but the basic structure didn't really change. The machine still took input, matched it to a pattern, and returned a prepared response.

That made these systems fundamentally narrow.

They could simulate conversation, but they couldn't really participate in it. They had no memory worth speaking of, no working model of the world, no useful ability to learn through interaction, and no way to act beyond the text they returned.
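To make the match-a-pattern, return-a-script structure concrete, here is a minimal sketch in Python. The two rules and the fallback line are hypothetical examples I made up for illustration; they are not taken from ELIZA's or A.L.I.C.E.'s actual scripts, which were far larger.

```python
import re

# Hypothetical rules for illustration only; the real ELIZA script was far larger.
RULES = [
    (re.compile(r"\bI feel (\w+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."


def respond(sentence: str) -> str:
    """Return the scripted reply for the first rule that matches."""
    for pattern, template in RULES:
        match = pattern.search(sentence)
        if match:
            # Echo the captured word back inside a canned template.
            return template.format(match.group(1).lower())
    return FALLBACK


print(respond("I feel sad today"))    # Why do you feel sad?
print(respond("The weather is bad"))  # Please go on.
```

Nothing here stores the conversation or models what "sad" means. The reply is the input reflected through a template, which is why the structure stays narrow however many rules you add.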

Then the interface changed before the intelligence did

Voice assistants changed the feel of AI before they really changed the underlying intelligence.

Siri, Alexa, Google Assistant, and similar systems made AI feel ambient. You didn't have to type into a box anymore. You could just ask for something aloud.

Under the hood, though, these systems were still bounded by intent libraries, narrow skills, and predefined routes.

That was still a meaningful step.

Recognising that "set an alarm for 7am" is a request, extracting the time, and carrying out the action is much more useful than a chatbot that only talks. It brought AI closer to software that could actually do something.

But the limit was obvious.

These systems could execute commands they had been designed to recognise. They couldn't generalise very far beyond them. If the skill existed, they looked smart. If it didn't, the illusion broke immediately.

They were still, in practice, routers sitting on top of fixed capabilities.
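A rough sketch of that router shape, assuming a tiny hand-written intent library. The patterns and the `set_alarm` and `play_music` handlers are hypothetical stand-ins, not how any particular assistant is implemented.

```python
import re
from typing import Optional


def set_alarm(time: str) -> str:
    return f"Alarm set for {time}"


def play_music(request: str) -> str:
    return f"Playing {request}"


# Hypothetical intent library: each entry pairs a pattern that extracts
# one slot with the fixed action it routes to.
INTENTS = [
    (re.compile(r"\bset an alarm for (\d{1,2}(?::\d{2})?\s?(?:am|pm))", re.IGNORECASE), set_alarm),
    (re.compile(r"\bplay (.+)", re.IGNORECASE), play_music),
]


def handle(utterance: str) -> Optional[str]:
    """Route a recognised request to its handler, or return None."""
    for pattern, handler in INTENTS:
        match = pattern.search(utterance)
        if match:
            return handler(match.group(1))
    return None  # no matching skill: nothing to fall back on


print(handle("Set an alarm for 7am"))  # Alarm set for 7am
print(handle("Book me a flight"))      # None
```

The second call shows the limit described above: a request outside the library does not degrade gracefully, it simply has nowhere to go.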

Generation changed the shape of the tool

The transformer architecture changed the field because it changed the economics of intelligence.

Instead of working through text one token at a time, transformers use attention to relate each token to the others across a much larger context, and that computation parallelises well. That made large-scale training practical. Once that became viable, researchers found something important: capability kept rising as model size, data, and compute rose with it.

That's what opened the large language model era.
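For readers who want to see what "attention" computes, here is a minimal single-head sketch of scaled dot-product attention in plain Python. It assumes toy hand-written vectors and leaves out everything a real transformer adds around it (learned projections, multiple heads, masking, batching).

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Score this query against every key in the context.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Toy example: one query that lines up more closely with the first key.
print(attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
))
```

Each query's output depends only on that query and the shared keys and values, not on the other queries' outputs, so in practice the loop is replaced by a few large matrix multiplications.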

GPT-3 changed the picture because one model could now write, summarise, translate, explain, and generate code without needing a different hand-built system for each task. It wasn't pulling a response from a lookup table. It was generating one from learned patterns compressed into the model itself.

Then ChatGPT did something just as important.

It turned that capability into a public interface that ordinary people could actually use.

For a lot of people, that was the moment AI stopped feeling like a research demo and started feeling like a general-purpose cognitive tool.

Then another shift followed. Once people started prompting models to reason step by step rather than jump straight to an answer, it became obvious that some capabilities had been latent all along. Chain-of-thought prompting, and then more explicit reasoning-focused training, pushed models into a different category. They were no longer only fluent. On some tasks, they became much better at deliberate problem-solving.

That was the bridge between generative AI and agentic AI.

The real break was action

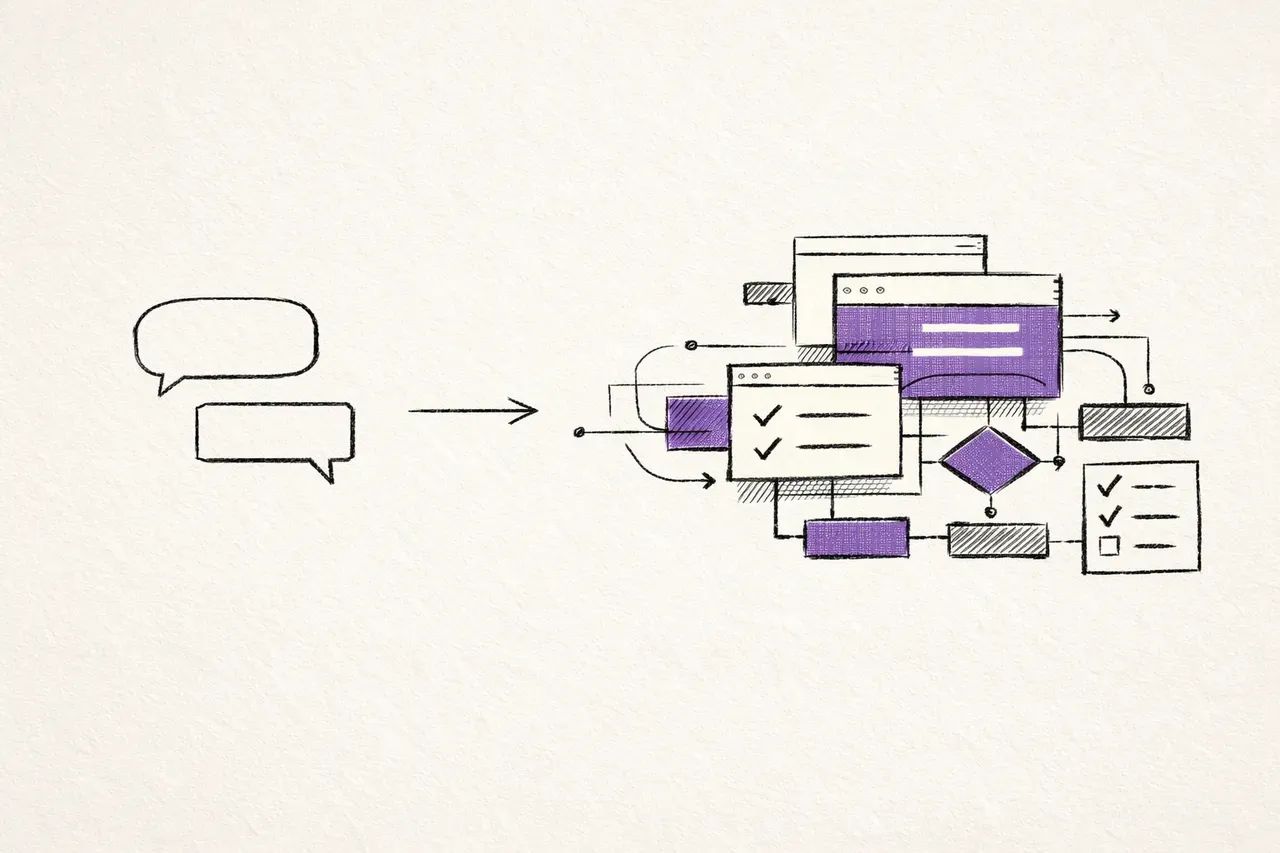

A chatbot lives inside one exchange.

You ask, it answers, and the interaction ends.

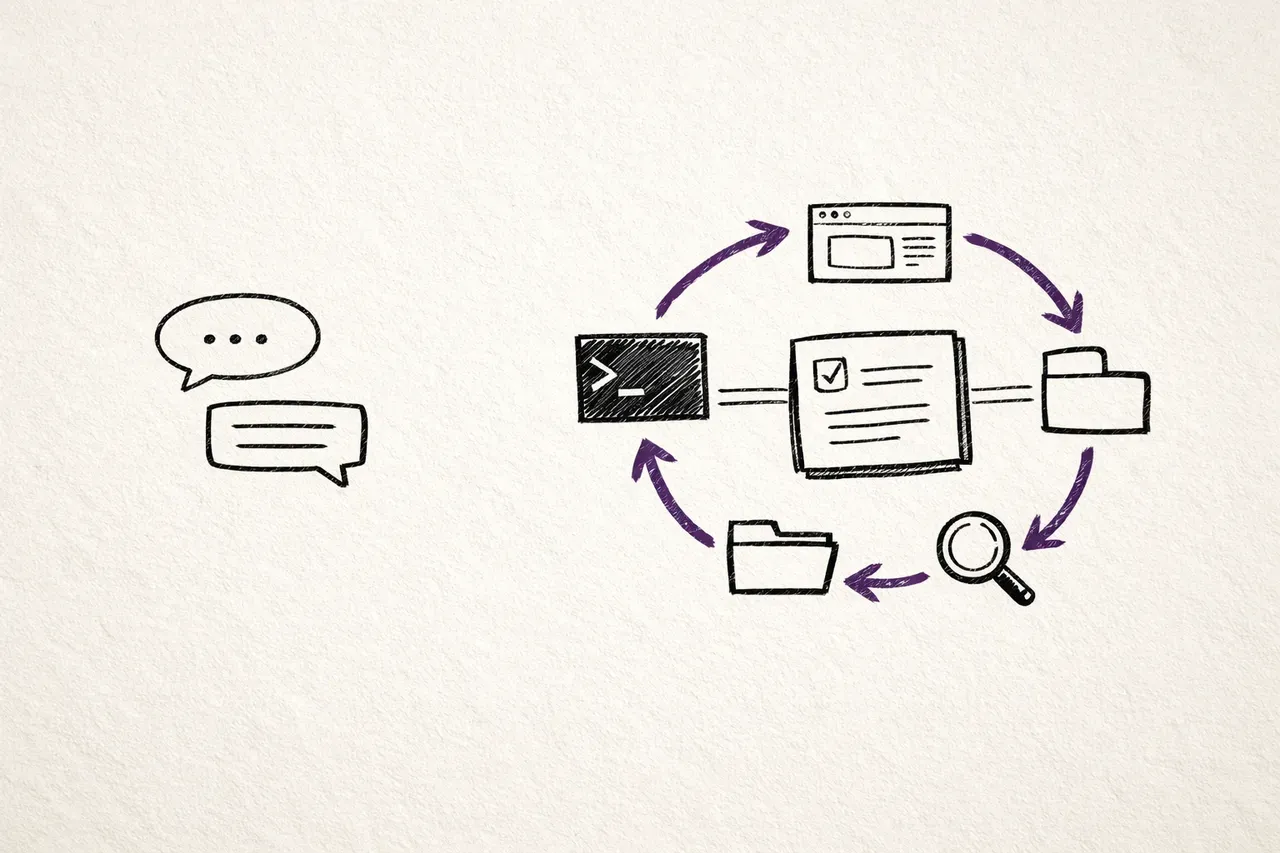

An agent works differently.

It receives a goal, decides on the next step, takes an action, observes the result, and then decides what to do next.

That loop is the important change:

- think

- act

- observe

- think again

Once you have that pattern, the model stops being only a response engine and starts becoming a decision engine.

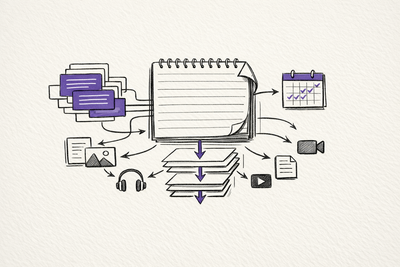

Function calling, tool use, and agent frameworks made this practical. Now the model could search the web, query a database, call an API, write a file, run code, or send a message, then use the result as input into the next step.
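Here is a minimal sketch of that think, act, observe loop. The two tools and the `choose_next_step` function are hypothetical: in a real agent the "think" step would be a model call, and here a few hard-coded rules stand in for it so the example runs on its own.

```python
# Hypothetical tools; real ones would hit a search API or the filesystem.
def search_web(query: str) -> str:
    return f"3 results for '{query}'"


def write_file(text: str) -> str:
    return f"saved {len(text)} characters"


TOOLS = {"search_web": search_web, "write_file": write_file}


def choose_next_step(goal, history):
    """Stand-in for the model: return (tool_name, argument), or None when done."""
    if not history:
        return ("search_web", goal)
    if len(history) == 1:
        # Use the previous observation as input to the next action.
        return ("write_file", history[-1][1])
    return None


def run_agent(goal: str, max_steps: int = 5) -> list:
    history = []  # list of (tool_name, observation) pairs
    for _ in range(max_steps):
        step = choose_next_step(goal, history)    # think
        if step is None:
            break
        tool_name, argument = step
        observation = TOOLS[tool_name](argument)  # act
        history.append((tool_name, observation))  # observe
    return history


for tool_name, observation in run_agent("agent frameworks"):
    print(tool_name, "->", observation)
```

The `max_steps` cap is a deliberate design choice in this sketch: a loop that decides for itself when to stop needs a hard ceiling in case it never does.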

Early systems such as AutoGPT and BabyAGI were messy, expensive, and often unreliable, but they did prove that a model could pursue a goal through a sequence of actions.

That's a much bigger shift than a slightly better chatbot.

Then the work started getting split up

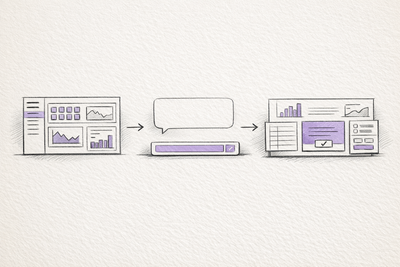

Single agents hit a practical limit very quickly.

Context is expensive. If one agent tries to hold every file, tool result, subtask, side question, and decision in one working memory, it becomes slow, brittle, and hard to steer.

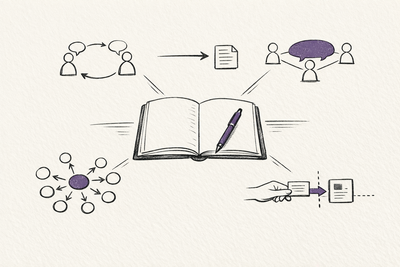

The obvious answer was the same answer human teams use.

Split the work up.

That's what multi-agent systems do.

Instead of one agent attempting everything, an orchestrator breaks the work into parts and hands those parts to more specialised subagents. One explores a codebase. Another verifies a result. Another handles a narrow research task. The useful output comes back condensed instead of dragging the full intermediate mess into one giant context window.

This is where the architecture starts to feel less like a chatbot and more like a small organisation.

Once agents can specialise, parallelise, and hand work back to one another, the topology changes. You're not dealing with one model that talks. You're dealing with a system of cooperating processes.
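A small sketch of the orchestrator pattern, under some loud assumptions: the three subagents are plain functions rather than model-backed agents, and a fixed plan stands in for the orchestrator's own decomposition of the goal. What it does show is the shape: work fans out in parallel and only condensed summaries come back.

```python
from concurrent.futures import ThreadPoolExecutor


def explore_codebase(task: str) -> str:
    # The bulky intermediate work stays inside the subagent...
    notes = [f"file_{i}.py: checked for '{task}'" for i in range(50)]
    # ...and only a condensed summary is handed back.
    return f"explore: checked {len(notes)} files for '{task}'"


def research_topic(task: str) -> str:
    return f"research: found background on '{task}'"


def verify_result(task: str) -> str:
    return f"verify: no problems found with '{task}'"


SUBAGENTS = {
    "explore": explore_codebase,
    "research": research_topic,
    "verify": verify_result,
}


def orchestrate(goal: str) -> list:
    # A fixed plan stands in for the orchestrator model's own decomposition.
    plan = [("explore", goal), ("research", goal), ("verify", goal)]
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(SUBAGENTS[role], subtask) for role, subtask in plan]
        # Results come back in plan order, one short summary per subagent.
        return [future.result() for future in futures]


for summary in orchestrate("login bug"):
    print(summary)
```

The orchestrator never sees the fifty intermediate notes from the explorer, only one line. That is the context saving described above.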

That's also why standards like MCP (Model Context Protocol) matter. MCP marks an important step in standardising how models connect to tools.

Once there is a stable way for models to discover and use tools across systems, the barrier to building real agent workflows drops sharply.

The same is true of computer-use models.

An agent that can operate software through a screen, mouse, and keyboard isn't limited to applications with tidy APIs. It can work across the messier reality of the existing software world.
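To illustrate the idea of a stable way to discover and use tools, here is a hypothetical in-process registry. To be clear about the assumptions: this is not the MCP SDK or its wire format, and the `Tool` and `ToolRegistry` names are invented for this sketch. It only shows the two operations that matter, listing what is available and calling it by name.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    name: str
    description: str
    parameters: dict  # parameter name -> human-readable type
    handler: Callable[..., str]


class ToolRegistry:
    """Hypothetical in-process registry; not the MCP SDK or wire format."""

    def __init__(self) -> None:
        self._tools = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def list_tools(self) -> list:
        # Discovery: an agent can read this without knowing the tools in advance.
        return [
            {"name": t.name, "description": t.description, "parameters": t.parameters}
            for t in self._tools.values()
        ]

    def call(self, name: str, **arguments) -> str:
        tool = self._tools[name]
        missing = set(tool.parameters) - set(arguments)
        if missing:
            raise ValueError(f"missing arguments: {sorted(missing)}")
        return tool.handler(**arguments)


registry = ToolRegistry()
registry.register(Tool(
    name="get_weather",
    description="Return a canned forecast for a city.",
    parameters={"city": "string"},
    handler=lambda city: f"Sunny in {city}",
))

print(registry.list_tools())
print(registry.call("get_weather", city="Lisbon"))  # Sunny in Lisbon
```

Because every tool is described the same way, an agent written against `list_tools` and `call` does not need custom glue for each new integration.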

Why the operating-system comparison fits

I don't think "AI as an operating system" is just a flashy metaphor anymore.

A traditional operating system allocates resources, schedules processes, manages memory, controls access, and mediates between applications and hardware.

An AI-centred system is starting to do similar work at a cognitive and workflow level:

- a central reasoning layer decides what to do

- context windows act like working memory

- external memory systems hold longer-term state

- agents act like processes with specialised roles

- tool layers connect those agents to the rest of the software stack

That's why the idea of an "AI assistant" already feels too small in some contexts.

The more ambitious systems aren't there to answer a question and disappear. They're there to remain present inside an environment, route work, maintain context, call tools, coordinate specialist agents, and keep pushing tasks toward outcomes.

That's much closer to an operating layer than a feature.
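The mapping in the list above can be sketched as a single data structure. This is a toy under stated assumptions: `OperatingLayer` is a name I made up, a dictionary lookup stands in for the central reasoning layer, and the agents are plain functions rather than models.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class OperatingLayer:
    context_limit: int = 3
    agents: dict = field(default_factory=dict)            # role -> callable "process"
    long_term_memory: dict = field(default_factory=dict)  # external memory
    working_memory: deque = field(init=False)             # bounded "context window"

    def __post_init__(self) -> None:
        self.working_memory = deque(maxlen=self.context_limit)

    def dispatch(self, role: str, task: str) -> str:
        # A dictionary lookup stands in for the central reasoning layer.
        result = self.agents[role](task)
        self.working_memory.append(result)    # oldest entry drops off when full
        self.long_term_memory[task] = result  # full record kept outside the window
        return result


layer = OperatingLayer(agents={
    "summarise": lambda task: f"summary of {task}",
    "search": lambda task: f"results for {task}",
})
for task in ["report A", "report B", "report C", "report D"]:
    layer.dispatch("summarise", task)

print(list(layer.working_memory))   # only the three most recent results
print(len(layer.long_term_memory))  # 4
```

The bounded deque is the part worth noticing. Working memory forgets by design, so anything that has to survive needs to be written somewhere more durable, much as a process has to write to disk what it cannot keep in RAM.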

Raw intelligence isn't the whole story

There is a temptation to reduce all of this back down to "the next model will be smarter."

That'll probably keep being true.

But it's not the most useful question.

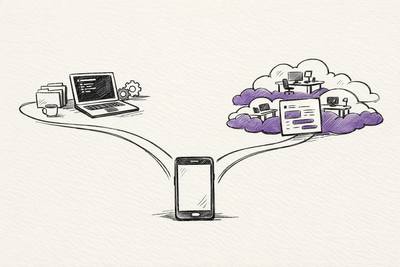

The better question is what kind of system the model is sitting inside.

A very capable model inside a thin chat interface is still just a chat interface.

A slightly less impressive model inside a strong tool loop, memory system, and coordination layer can often do more useful work.

That's why I think architecture is now a more important lens than model names alone.

The pace changes the picture too.

The gap between research and deployment has collapsed. A paper, prototype, or capability shift no longer sits around for years before it changes real workflows. The lag is often measured in months, and sometimes in weeks.

That changes how people and organisations need to think about the field.

You don't really get to wait for AI to settle before deciding whether it changes your own line of work.

What I would watch next

Three things tell you more than the weekly model leaderboard.

The first is reliability.

When agents can do meaningful work for long enough without constant babysitting, a lot of knowledge work starts to reorganise around them.

The second is tool standardisation.

When tool access becomes normal and interoperable, every software surface becomes easier for agents to operate by default.

The third is how far this same logic moves beyond the browser tab.

Once the same planning and action loops move into robotics and physical systems, the conversation stops being only about digital labour. It starts touching logistics, manufacturing, care work, transport, and the wider physical economy.

That's when the shift leaves the chat window entirely.

The real question

I don't think the most useful question is whether the next model will be smarter than the last one.

That'll keep happening.

The more useful question is what kind of system AI is becoming.

The answer, increasingly, looks like this:

- less a chatbot

- more an agent

- less a single agent

- more a coordinated system

- less a feature inside software

- more a layer that sits across software and decides how work moves

That doesn't guarantee good outcomes.

More capability can produce more leverage, but it can also produce more fragility, more concentration of power, and more badly governed automation.

The important shift is that the system is starting to decide, route, and act, rather than only answer.

That's why I think AI is moving from chatbots to operating systems.
